How to Fix the Future: An Interview with Andrew Keen

Andrew Keen has spent the last decade writing critically about the digital revolution. “I’ve been called everything from a Luddite and a curmudgeon to the ‘Antichrist of Silicon Valley,’” he writes. In his newest book, How to Fix the Future, he maintains his skeptical stance toward big tech and big data but turns it toward positive case studies and solutions in progress. He’ll be speaking at the John Adams Institute in Amsterdam on May 24.

Your book introduces us to technology’s ‘Four Horsemen of the Apocalypse.’ Who are they, and what hell hath they delivered unto us?

The Four Horsemen of the Apocalypse are the four largest internet companies—Facebook, Google, Apple and Amazon—who dominate not only the industry but the world economy. If you add Microsoft, they’re what journalist Farhad Manjoo called the “Frightful 5”… but I’m not sure it’s the right question. Yes, the big companies are influential but they’re not the only problem. The biggest problem is that the technology is running ahead of us as human beings. We’re not able to exercise our agency, to shape the world according to what we want, rather than what it wants.

The matter of ‘agency’ takes a central role in your book. What does it mean to you?

Agency means controlling your own fate, shaping your own history, determining how you want your world, your society, and your culture to appear, to be. At the moment, we have these very large, powerful, impersonal companies that seem to be almost out of control. We’re not sure how they work, we’re not sure what they know about us, and we’re not sure how they interact with governments. The technology itself sometimes is addictive, and almost seems to have a mind of its own, while human beings are struggling to keep up. We’re not quite sure how much or how little we should use technology: we need to understand it to keep our jobs, and yet it’s also replacing our jobs in terms of what we do and in terms of our labor. It’s a tricky situation. The challenge of course is agency, and that’s what the book is about: reclaiming our agency. It’s a book of examples about how we are actually doing that.

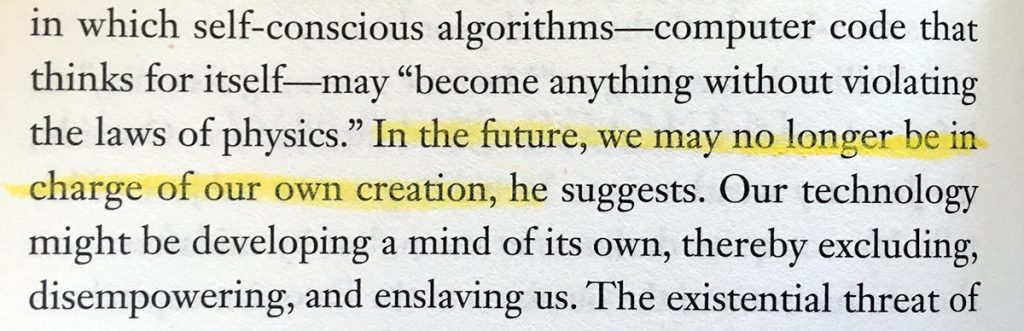

You contrast this with a statement by Ada Lovelace, the first person to recognize that computers had value beyond mere computation. She said that computers do not “originate” ideas. They don’t have goals, whereas we do. Do you foresee that this distinction will continue?

Some people think that computers will have algorithms that acquire agency. If they do, those computers will be our final invention because we’ll be enslaved by them, but I don’t think they will. It’s not really a book about that, and I’m not technologically literate enough to make a call. I assume it’s not going to happen, certainly not for a few generations.

You pose the question to one of the experts you speak with: how can we fall back in love with the future? Were you able through this book to fall back in love?

Well, I was never in love with the future before, but that’s a good question. No, I didn’t fall back in love with it, but I’ve become a little bit more hopeful. Maybe the ‘love’ metaphor is an interesting one; we shouldn’t fall in love with the future, it’s too dangerous. We need to keep a distance, have a mature relationship. We’ve often had a very teenage relationship with the future, either loving it or hating it. I think we need a more adult relationship, more thoughtful and a little bit more distant. And a bit more reciprocal in the sense that we’re not thinking that the future’s going to solve everything.

The problem with Silicon Valley in particular is that it presents the future as something which would be fixed. All our problems—scarcity, inequality—all these things will be fixed by the future, and of course that is profoundly wrong. When anyone promises that, it’s always wrong, that’s been proven time and time again in history. This is just one more chapter of how exaggerating the promise of the future is so dangerous. This is why, in the book, I use Thomas More’s book Utopia as a reference point, because it’s such an important example to bring people down to earth about the future. It’s sort of half-theory and half-satirical, half-believing in the future and half-skeptical, and I think that’s healthy. Obviously my book isn’t in that league, but it addresses the future in a more ambivalent way.

My book is designed to get people to think about what kind of future they want, in terms of their work, their organization, their relationship to government, to data, to big tech companies, and so on. This future is imminent, and these issues aren’t going away.

How can we best use our agency to create the future we want?

By doing small things. First of all, we’re all very impatient, and think that things should be fixed immediately, but there’s no app to fix the future. We can do lots of things as individuals: Many of us are parents, so we should be educating our children about the uses and misuses of this technology. Some of us are teachers and we can bring many of these ideas into the classroom. We’re all citizens so we should be voting for politicians—if we’re lucky enough to live in democracies—who take strong positions on this, whether it’s on antitrust or guaranteed minimum income. We may be entrepreneurs, and we can come up with new ideas for tech companies that don’t exploit people as much as the current companies do. And we’re all consumers as well, so we need to be more demanding and also more realistic: we can’t have stuff for free. We’ve been tricked as consumers into believing that we almost have the right to free stuff, and if we don’t get it for free then there’s something wrong with the product. Actually the best products aren’t free, they’re the ones which are high quality and transparent, which we pay for.

We wear many hats, so we can do many things.

Google’s motto was famously ‘Don’t be evil,’ but that hasn’t stopped them from becoming one of the Four Horsemen. How does it go wrong, or more importantly, how can you stop it from going wrong further down the line?

One developer in the book, Tristan Harris, has become quite prominent in Silicon Valley, and he believes that developers should sign a kind of ‘Hippocratic Oath’ like doctors do—to do no harm. I’m not convinced that it’s realistic or viable, but it’s an interesting idea and it gets you thinking. But certainly developers or entrepreneurs shouldn’t be designing products that are purposefully addictive. They should have a moral responsibility.

In particular, Facebook seems to be purposefully addictive. People check their accounts hundreds of times a day, and they’re addicted to this always-on economy of emotions. Facebook figured out our emotions, our greatest flaws, our love of ourselves, our narcissism, which is having a very bad impact. Designers have a moral responsibility, but in particular entrepreneurs do, because they decide what gets built.

How to Fix the Future: An Interview with Andrew Keen was published on the John Adams Institute website in advance of his talk in Amsterdam.